Continuing our coverage of the recent MariaDB Server Fest 2020, we now turn to the next talk, by Seppo Jaakola, titled Galera Clustering in MariaDB 10.5 and beyond.

The talk gives a quick overview not just of Galera 4 in MariaDB 10.4, but also of the new features built for MariaDB Server 10.5, namely GTID consistency, cluster error voting, XA transactions within the cluster, non-blocking DDL operations and Black Box. It also covers planning for MariaDB Server 10.6.

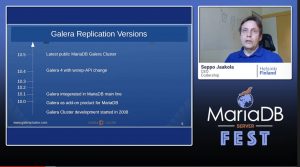

There have been many more wsrep-API changes (which require rolling upgrades) than major versions of Galera; Galera 4 is currently the latest major version, and the latest wsrep-API version is 26. Rolling upgrades are key in Galera because they ensure that an upgrade causes no downtime within the cluster. The biggest feature in Galera 4 is streaming replication, which helps you execute large (greater than 2GB) and long-running transactions. The feature was ready for MariaDB 10.3 but did not quite catch the release train, so it ended up in MariaDB 10.4.

Global Transaction ID (GTID) compatibility and consistency is a new feature implemented by Mario Karuza to resolve GTID incompatibilities between Galera and MariaDB. Galera identifies a GTID as `<uuid>:<sequence number>`, whereas MariaDB uses `<domain-id>-<server-id>-<sequence number>`. Galera Cluster now uses the same domain ID and server (node) ID, and the software stores and shows only the MariaDB-format GTID. Galera Cluster can also operate as a replica (slave) of a MariaDB primary (master) server, and the GTID coming from the MariaDB primary is preserved in the Galera Cluster (you can find the same GTIDs in the binary log files). You can read more at: Using MariaDB GTIDs with MariaDB Galera Cluster.
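To make the two identifier shapes concrete, here is a minimal Python sketch that parses each one. It assumes the Galera form is a UUID and a sequence number joined by a colon, and that the MariaDB form is three integers joined by hyphens (for example `0-1-100`). The class and function names are made up for illustration and are not part of Galera or MariaDB.

```python
import uuid
from typing import NamedTuple


class GaleraGtid(NamedTuple):
    """Galera-style identifier: cluster UUID plus sequence number."""
    cluster_uuid: uuid.UUID
    seqno: int


class MariaDbGtid(NamedTuple):
    """MariaDB-style identifier: domain ID, server ID, sequence number."""
    domain_id: int
    server_id: int
    seqno: int


def parse_galera_gtid(text: str) -> GaleraGtid:
    # The UUID itself contains hyphens but no colon, so split on the last colon.
    uuid_part, _, seq_part = text.rpartition(":")
    return GaleraGtid(uuid.UUID(uuid_part), int(seq_part))


def parse_mariadb_gtid(text: str) -> MariaDbGtid:
    # Exactly three hyphen-separated integers are expected; anything else raises.
    domain, server, seq = (int(part) for part in text.split("-"))
    return MariaDbGtid(domain, server, seq)


if __name__ == "__main__":
    print(parse_galera_gtid("6a2f0c1e-9d3b-11ea-8f5a-0242ac110002:42"))
    print(parse_mariadb_gtid("0-1-100"))
```

The sketch only illustrates why the two formats could not be compared directly: one is keyed by a cluster UUID, the other by numeric domain and server IDs.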

Cluster Error Voting (which started life as MDEV-17048) is a new feature implemented by Alexey Yurchenko. It is a protocol by which nodes decide how the cluster reacts to problems in replication, for example when one or several nodes fail to apply an incoming transaction (e.g. because of a suspected inconsistency). In a 5-node cluster, if 2 nodes fail to apply the transaction, they are removed from the cluster, and a DBA can fix what went wrong so that the nodes can rejoin.
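The 5-node example can be sketched as a simple majority tally. This is a toy model and not the actual Galera protocol: the function name, the outcome labels and the strict-majority rule are assumptions made for illustration, and tie handling is deliberately left out because the talk summary does not cover it.

```python
from __future__ import annotations

from collections import Counter


def tally_votes(results: dict[str, str]) -> tuple[str, list[str]]:
    """Return the majority outcome and the nodes that disagreed with it.

    `results` maps a node name to its apply outcome, e.g. "ok" or "error".
    """
    tally = Counter(results.values())
    outcome, count = tally.most_common(1)[0]
    if count * 2 <= len(results):
        # Assumption: this sketch refuses to decide without a strict majority.
        raise ValueError("no strict majority; tie handling is outside this sketch")
    dissenters = sorted(node for node, result in results.items() if result != outcome)
    return outcome, dissenters


if __name__ == "__main__":
    votes = {"n1": "ok", "n2": "ok", "n3": "ok", "n4": "error", "n5": "error"}
    outcome, to_remove = tally_votes(votes)
    print(outcome, to_remove)
```

In this model the two nodes that failed to apply the transaction are the ones that leave the cluster, matching the scenario described above.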

XA Transaction Support is a feature implemented by Daniele Sciaccia and Leandro Pacheco de Sousa. The goal is for Galera Cluster to operate as a resource manager in an XA infrastructure: under a transaction coordinator, Galera Cluster must be able to prepare a transaction and then commit it or roll it back. XA transactions build on streaming replication, which is the foundation for them. The work was ready for MariaDB 10.4 but was not accepted into the main tree; it was then targeted at MariaDB 10.5 but missed that train too, because MariaDB Server's own XA work conflicted with it. So now it will be in MariaDB Server 10.6.
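The coordinator and resource manager roles can be illustrated with a toy two-phase commit in Python. Nothing here talks to a database: `ResourceManager`, `two_phase_commit` and the state names are hypothetical, and the sketch only shows the prepare-then-commit-or-rollback flow that a resource manager has to support.

```python
class ResourceManager:
    """Toy stand-in for one participant, e.g. a cluster acting as an XA resource manager."""

    def __init__(self, name: str, can_prepare: bool = True) -> None:
        self.name = name
        self.can_prepare = can_prepare
        self.state = "active"

    def prepare(self) -> bool:
        # Phase 1: promise that a later commit will succeed, or refuse.
        self.state = "prepared" if self.can_prepare else "failed"
        return self.can_prepare

    def commit(self) -> None:
        self.state = "committed"

    def rollback(self) -> None:
        self.state = "rolled_back"


def two_phase_commit(managers: list) -> bool:
    """Commit on every manager only if all of them prepare; otherwise roll all back."""
    for rm in managers:
        if not rm.prepare():
            for other in managers:
                other.rollback()
            return False
    # Phase 2: every participant prepared, so the coordinator commits everywhere.
    for rm in managers:
        rm.commit()
    return True


if __name__ == "__main__":
    participants = [ResourceManager("galera"), ResourceManager("other", can_prepare=False)]
    print(two_phase_commit(participants), [rm.state for rm in participants])
```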

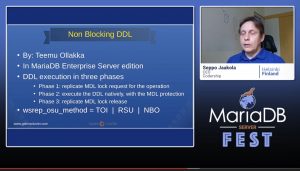

Non-blocking DDL is a feature implemented by Teemu Ollakka and it is only included in MariaDB Enterprise Server edition. Seppo decided not to go through the details of this feature since it is not in the regular MariaDB Server. This will be available in MariaDB Server eventually, but for now, it is an Enterprise only feature. You might be interested in the documentation for this: Performing Online Schema Changes with Galera Cluster.

Black Box is a feature implemented by Pekka Lampio, and it is also a MariaDB Enterprise Server edition feature. It allows you to store debug messages in a main memory (shm) ring buffer, and it helps with troubleshooting a crashed server or even in cluster testing.
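The ring buffer idea can be sketched in a few lines of Python. This is an in-process illustration only: the real feature is described as using shared memory, which this sketch does not attempt, and the `BlackBox` class and its method names are invented for the example.

```python
from collections import deque


class BlackBox:
    """Fixed-size log that keeps only the most recent messages."""

    def __init__(self, capacity: int) -> None:
        # A deque with maxlen silently drops the oldest entry when full.
        self._messages = deque(maxlen=capacity)

    def log(self, message: str) -> None:
        self._messages.append(message)

    def dump(self) -> list:
        """Return the retained messages, oldest first."""
        return list(self._messages)


if __name__ == "__main__":
    box = BlackBox(capacity=3)
    for i in range(5):
        box.log(f"debug message {i}")
    print(box.dump())
```

Because the buffer has a fixed size, logging stays cheap and bounded, and what remains after a crash is the most recent activity, which is usually what matters for troubleshooting.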

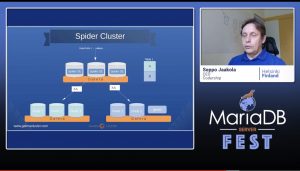

MariaDB 10.6 planning includes XA transaction support testing and documentation work, as well as testing it with a SPIDER cluster (the SPIDER storage engine is part of MariaDB and also depends on XA support). This helps MariaDB 10.6 become a “sharding cluster”, a setup that could also enable extreme write scalability.

There is also ongoing work on test system improvements (this does not show up for the end user, but extending the test coverage is extremely important for development), dynamic SSL/TLS encryption (so you could change an SSL implementation at runtime) and further optimisations to streaming replication.

If you would like to see other new features in MariaDB 10.6 or even in Galera Cluster, do not hesitate to drop us a line at info@codership.com or on our GitHub.